So a client pinged me a few weeks ago. Their AWS bill had quietly crept up - not dramatically, not "CFO-calling-you-at-home" levels, but enough that someone finally opened Cost Explorer and started asking uncomfortable questions. The culprit? An AWS CDK pipeline (S3 to CloudTrail to EventBridge to Lambda) that nobody had properly cost-modelled before it shipped to production. Everything worked. The architecture was actually pretty solid. But three cost drivers were running hot, and two of them were completely invisible until the bill arrived.

That conversation turned into this post.

Who This Cost Teardown Is For

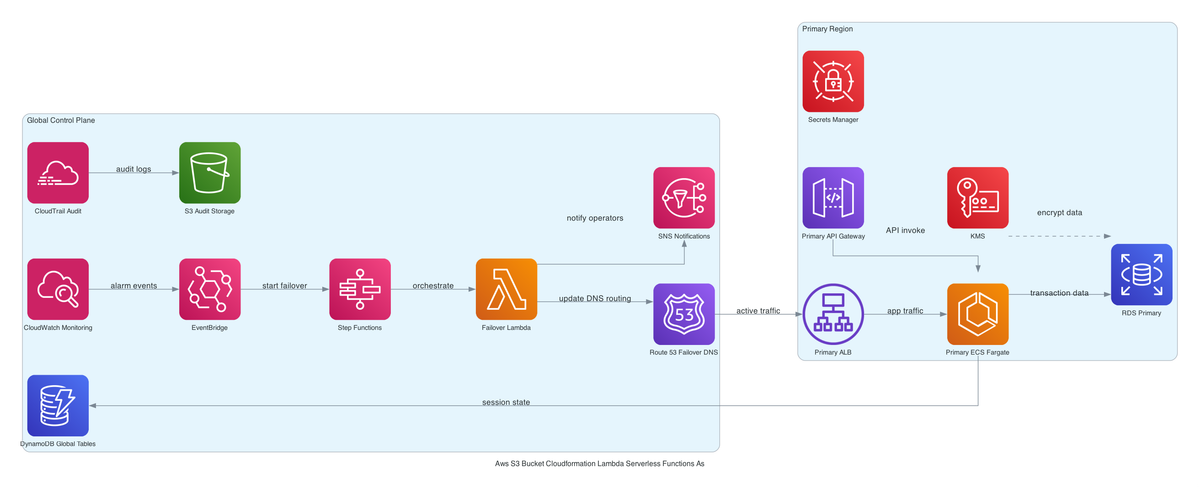

If you're running - or planning - an S3-triggered serverless workflow in CDK TypeScript where Lambda needs to be VPC-placed, encrypted with KMS, and auditable, this is for you. Specifically, if you've wired up CloudTrail data events feeding into EventBridge to trigger Lambda (instead of the direct S3 event notification path), the cost model is genuinely non-obvious and most existing posts don't touch it.

You should be comfortable reading CDK stacks and have a rough sense of how IAM, VPC endpoints, and KMS work. I'm not going to explain what a Lambda function is.

Cost Problem and Context

OK so here's the situation. You chose - correctly, I'd argue - to route S3 object events through CloudTrail data events into EventBridge rather than using direct S3 event notifications. The reasons are good: richer event filtering, full audit record of every object operation, ability to fan out to multiple targets without touching the bucket config.

But this decision has three separate cost implications that compound in ways nobody models upfront:

- CloudTrail data events aren't free. $0.10 per 100,000 events.

- VPC interface endpoints for S3, Secrets Manager, and SSM (your Lambda needs all three to operate privately) cost roughly $7.30/month per endpoint per AZ, plus data processing.

- KMS API calls across every service layer (bucket encryption, Lambda environment variables, CloudWatch Logs) add up at $0.03 per 10,000 requests.

In isolation, none of these are scary. Together, at scale, they can make your Lambda execution cost look like a rounding error.

A bucket receiving 10 million PUTs per day? That's $10/day just in CloudTrail data event charges. Roughly $300/month. For audit logging. Meanwhile, if your EventBridge rule only hands a small slice of those events to Lambda, the function itself might cost a few dollars a month. The tail is wagging the dog, right?

Where Spend Is Coming From

Let me walk through each layer because the surprise is usually in the order of magnitude, not the existence of the cost.

CloudTrail data events are the big one. The first copy of management events is free, but data events - which is what you need to capture S3 object-level activity (PutObject, GetObject, DeleteObject) - are priced per event. This blew my mind when I first modelled it properly. If you're processing user-uploaded files, batch imports, or any kind of high-throughput pipeline, model this first. 1 million PUTs/day = 10 × 100,000 events = $1/day, or ~$30/month just for the trail. 10 million PUTs/day puts it at ~$300/month.
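The per-event arithmetic is worth writing down. Here is a minimal TypeScript sketch of it, assuming the $0.10 per 100,000 rate quoted earlier, one data event per PUT, and a 30-day month. `cloudTrailMonthlyCost` and its `capturedFraction` parameter (the share of events your selectors still record) are illustrative names, not an AWS API.

```typescript
// Sketch: CloudTrail data event cost model.
// Assumptions: $0.10 per 100,000 data events, one data event per PUT, 30-day month.
const DATA_EVENT_PRICE_PER_100K = 0.1;
const DAYS_PER_MONTH = 30;

/**
 * Monthly data event cost for a daily event volume.
 * capturedFraction: share of events the trail's selectors still record (1 = everything).
 */
function cloudTrailMonthlyCost(eventsPerDay: number, capturedFraction = 1): number {
  if (capturedFraction < 0 || capturedFraction > 1) {
    throw new RangeError("capturedFraction must be between 0 and 1");
  }
  const eventsPerMonth = eventsPerDay * DAYS_PER_MONTH * capturedFraction;
  return (eventsPerMonth / 100_000) * DATA_EVENT_PRICE_PER_100K;
}

for (const putsPerDay of [100_000, 1_000_000, 10_000_000]) {
  console.log(`${putsPerDay} PUTs/day -> ~$${cloudTrailMonthlyCost(putsPerDay).toFixed(2)}/month`);
}

// Scoped selectors that keep only 30% of events (i.e. drop ~70%).
console.log(`1M PUTs/day, scoped -> ~$${cloudTrailMonthlyCost(1_000_000, 0.3).toFixed(2)}/month`);
```

With `capturedFraction` at 0.3, the 1M PUTs/day case drops from ~$30 to ~$9 a month.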

VPC endpoints are the sneaky one. The AWS CDK VPC endpoint cost breakdown covers this in detail, but the short version: each interface endpoint is ~$7.30/month per AZ in hourly charges, plus data processing charges. If you're deploying to 3 AZs (as you should for production), that's ~$22/month per endpoint. You need at least S3, Secrets Manager, and SSM for a Lambda that reads secrets and parameters privately. That's ~$66/month minimum, before data charges, if all three are interface endpoints; S3 also offers a gateway endpoint with no hourly charge, which brings that floor down to ~$44. Teams consistently undercount this because the cost shows up under "VPC" not "Lambda." Classic AWS.
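A sketch of the endpoint line item, under stated assumptions: ~$0.01 per interface endpoint per AZ-hour (which is where ~$7.30/month comes from at 730 hours), gateway endpoints at no hourly charge, and data processing ignored. Check the rate for your region; the types and function name are illustrative.

```typescript
// Sketch: hourly VPC endpoint charges only (data processing excluded).
// Assumptions: $0.01 per interface endpoint per AZ-hour, 730 hours/month,
// gateway endpoints (e.g. S3) carry no hourly charge.
const INTERFACE_ENDPOINT_PER_AZ_HOUR = 0.01;
const HOURS_PER_MONTH = 730;

type EndpointKind = "interface" | "gateway";

interface EndpointSpec {
  service: string;
  kind: EndpointKind;
}

function endpointMonthlyCost(endpoints: EndpointSpec[], azCount: number): number {
  // Only interface endpoints are billed per AZ-hour.
  const interfaceCount = endpoints.filter((e) => e.kind === "interface").length;
  return interfaceCount * azCount * INTERFACE_ENDPOINT_PER_AZ_HOUR * HOURS_PER_MONTH;
}

const allInterface: EndpointSpec[] = [
  { service: "s3", kind: "interface" },
  { service: "secretsmanager", kind: "interface" },
  { service: "ssm", kind: "interface" },
];

const s3Gateway: EndpointSpec[] = [
  { service: "s3", kind: "gateway" },
  { service: "secretsmanager", kind: "interface" },
  { service: "ssm", kind: "interface" },
];

console.log(endpointMonthlyCost(allInterface, 3).toFixed(2)); // 65.70
console.log(endpointMonthlyCost(allInterface, 2).toFixed(2)); // 43.80
console.log(endpointMonthlyCost(s3Gateway, 3).toFixed(2)); // 43.80
```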

KMS API calls hit every read and write path. S3 decrypts objects on GET, encrypts on PUT. Lambda decrypts environment variables on every cold start. CloudWatch Logs encrypts every log batch. At low throughput this is $2-3/month and you don't care. At high throughput - say, a Lambda invoked 5 million times a month - you're actually looking at a meaningful KMS line item, especially if you're not caching the data key. $0.03 per 10,000 calls sounds cheap until you do the math: 5 million Lambda invocations with 3 KMS calls each = 15 million calls = $45/month. (I learned this the hard way on a Friday deploy, don't ask.)
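The same calculation as a minimal sketch, assuming a flat number of KMS calls per invocation and the $0.03 per 10,000 rate above. It ignores any free-tier allowance, and real call counts depend on caching, S3 Bucket Keys, and how often cold starts happen. `kmsMonthlyCost` is an illustrative name.

```typescript
// Sketch: KMS request cost at $0.03 per 10,000 requests.
// Assumption: a fixed number of KMS calls per invocation; no free tier applied.
const KMS_PRICE_PER_10K_REQUESTS = 0.03;

function kmsMonthlyCost(invocationsPerMonth: number, kmsCallsPerInvocation: number): number {
  const requests = invocationsPerMonth * kmsCallsPerInvocation;
  return (requests / 10_000) * KMS_PRICE_PER_10K_REQUESTS;
}

// The example from the text: 5M invocations x 3 calls = 15M requests.
console.log(kmsMonthlyCost(5_000_000, 3).toFixed(2)); // 45.00

// Halving calls per invocation (for example through caching) halves the bill.
console.log(kmsMonthlyCost(5_000_000, 1.5).toFixed(2)); // 22.50
```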

CloudWatch Logs without explicit retention is the classic silent cost. This stack sets retention explicitly, which is correct hygiene (see CloudWatch log retention cost control), but if anyone recreates the Lambda manually outside CDK, the new log group defaults to never expiring and the cost control breaks silently.

Seriously.

What Can Safely Change

Not everything here is load-bearing. Some of these costs can be trimmed without touching the core design.

CloudTrail - filter your event selectors. You know how most buckets have a mix of prefixes doing different things? You don't need to capture every S3 data event if your Lambda only cares about PutObject on a specific prefix. Scope the trail's data event selector to the exact prefix (arn:aws:s3:::your-bucket/incoming/) and, if reads don't matter, to write-only events. On buckets with mixed workloads this can cut CloudTrail costs substantially; 60-80% is plausible when most traffic lives outside that prefix.
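In CDK this scoping can look roughly like the sketch below, assuming aws-cdk-lib v2 and its `Trail.addS3EventSelector` method. The bucket, the `incoming/` prefix, construct IDs, and the inline handler are hypothetical placeholders. Basic event selectors filter only by resource prefix and read/write type, so `WRITE_ONLY` still records `DeleteObject` and other writes. Filtering on event name (just `PutObject`) needs CloudTrail advanced event selectors, which, as far as I know, you reach through the `CfnTrail` escape hatch rather than the L2 `Trail` construct.

```typescript
import { App, Stack } from "aws-cdk-lib";
import * as cloudtrail from "aws-cdk-lib/aws-cloudtrail";
import * as events from "aws-cdk-lib/aws-events";
import * as targets from "aws-cdk-lib/aws-events-targets";
import * as lambda from "aws-cdk-lib/aws-lambda";
import * as s3 from "aws-cdk-lib/aws-s3";

const app = new App();
const stack = new Stack(app, "ScopedTrailStack");

const bucket = new s3.Bucket(stack, "IngestBucket");
const fn = new lambda.Function(stack, "Processor", {
  runtime: lambda.Runtime.NODEJS_20_X,
  handler: "index.handler",
  code: lambda.Code.fromInline("exports.handler = async () => {};"),
});

// Record data events only for writes under incoming/, not every object
// operation on the bucket. This trail records no management events
// (assumption: another trail in the account already covers those).
const trail = new cloudtrail.Trail(stack, "IngestDataEventTrail");
trail.addS3EventSelector([{ bucket, objectPrefix: "incoming/" }], {
  readWriteType: cloudtrail.ReadWriteType.WRITE_ONLY,
  includeManagementEvents: false,
});

// EventBridge rule matching only PutObject calls under the same prefix.
new events.Rule(stack, "OnIncomingPut", {
  eventPattern: {
    source: ["aws.s3"],
    detailType: ["AWS API Call via CloudTrail"],
    detail: {
      eventSource: ["s3.amazonaws.com"],
      eventName: ["PutObject"],
      requestParameters: {
        bucketName: [bucket.bucketName],
        key: [{ prefix: "incoming/" }],
      },
    },
  },
  targets: [new targets.LambdaFunction(fn)],
});

app.synth();
```

The rule repeats the prefix filter as a belt-and-braces measure, so it stays narrow even if some other trail records broader data events for this bucket.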

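KMS - cut call volume before you restructure keys. For S3, enabling S3 Bucket Keys lets S3 reuse a short-lived bucket-level key instead of calling KMS for every object it encrypts, which sharply reduces S3's share of KMS requests. Using a single CMK for everything is operationally simple. Splitting into per-service keys doesn't reduce call volume on its own, though it does give you per-service key policies and cleaner attribution of KMS calls. If your own Lambda code encrypts or decrypts data, caching data keys across warm invocations (rather than calling KMS per request) is where the application-side savings come from.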
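S3 Bucket Keys are the most direct lever on S3's share of those KMS calls. Here is a minimal CDK sketch, assuming aws-cdk-lib v2 (construct IDs are hypothetical), of a bucket that uses a customer managed key with `bucketKeyEnabled` set. One caveat: with Bucket Keys, the KMS encryption context is the bucket ARN rather than the object ARN, so check any key policy or IAM conditions that reference object-level context before you flip it on.

```typescript
import { App, RemovalPolicy, Stack } from "aws-cdk-lib";
import * as kms from "aws-cdk-lib/aws-kms";
import * as s3 from "aws-cdk-lib/aws-s3";

const app = new App();
const stack = new Stack(app, "BucketKeyStack");

// Customer managed key for the ingest bucket.
const bucketCmk = new kms.Key(stack, "IngestBucketKey", {
  enableKeyRotation: true,
  removalPolicy: RemovalPolicy.RETAIN,
});

// bucketKeyEnabled: S3 reuses a short-lived bucket-level key derived from the CMK
// instead of calling KMS for every object it encrypts.
new s3.Bucket(stack, "IngestBucket", {
  encryption: s3.BucketEncryption.KMS,
  encryptionKey: bucketCmk,
  bucketKeyEnabled: true,
  enforceSSL: true,
});

app.synth();
```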

VPC endpoints - right-size by AZ. If your Lambda only runs in 2 AZs (a calculated risk some teams take for non-critical workloads), you save ~$7.30/month per interface endpoint, or ~$22/month across the three. For dev/staging environments, consider a NAT Gateway instead of individual endpoints; at low traffic volumes the math can change quite a bit.
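Here is a sketch of a 2-AZ private network for the Lambda, assuming aws-cdk-lib v2; construct IDs are hypothetical. It uses the free S3 gateway endpoint plus interface endpoints for Secrets Manager and SSM, with no NAT Gateway, so `maxAzs` directly sets how many AZ-hours the interface endpoints bill for.

```typescript
import { App, Stack } from "aws-cdk-lib";
import * as ec2 from "aws-cdk-lib/aws-ec2";

const app = new App();
const stack = new Stack(app, "PrivateLambdaNetwork");

// Two AZs, no NAT gateways: isolated subnets reach AWS services only via endpoints.
const vpc = new ec2.Vpc(stack, "Vpc", {
  maxAzs: 2,
  natGateways: 0,
  subnetConfiguration: [
    { name: "isolated", subnetType: ec2.SubnetType.PRIVATE_ISOLATED, cidrMask: 24 },
  ],
});

// S3 gateway endpoint: added to the subnet route tables, no hourly charge.
vpc.addGatewayEndpoint("S3Endpoint", {
  service: ec2.GatewayVpcEndpointAwsService.S3,
});

// Interface endpoints: billed per endpoint per AZ-hour, so maxAzs drives this line item.
vpc.addInterfaceEndpoint("SecretsManagerEndpoint", {
  service: ec2.InterfaceVpcEndpointAwsService.SECRETS_MANAGER,
});
vpc.addInterfaceEndpoint("SsmEndpoint", {
  service: ec2.InterfaceVpcEndpointAwsService.SSM,
});

app.synth();
```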

CloudWatch Logs - set retention aggressively for non-compliance workloads. 7 days is often enough for operational debugging. 90 days if you need it for security review. The default "never expire" is almost never the right answer.
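Here is a minimal sketch of explicit retention in CDK. The stack creates the log group and hands it to the function, so retention lives in code rather than in whatever Lambda auto-creates. This assumes an aws-cdk-lib version recent enough that `lambda.Function` accepts a `logGroup` prop (older versions used `logRetention`). Construct IDs and the inline handler are placeholders. Swap `ONE_WEEK` for `THREE_MONTHS` where security review needs 90 days.

```typescript
import { App, RemovalPolicy, Stack } from "aws-cdk-lib";
import * as lambda from "aws-cdk-lib/aws-lambda";
import * as logs from "aws-cdk-lib/aws-logs";

const app = new App();
const stack = new Stack(app, "ProcessorLogging");

// CDK-owned log group with explicit retention, instead of the
// never-expiring group Lambda creates on first invocation.
const logGroup = new logs.LogGroup(stack, "ProcessorLogs", {
  retention: logs.RetentionDays.ONE_WEEK, // 7 days for operational debugging
  removalPolicy: RemovalPolicy.DESTROY,
});

new lambda.Function(stack, "Processor", {
  runtime: lambda.Runtime.NODEJS_20_X,
  handler: "index.handler",
  code: lambda.Code.fromInline("exports.handler = async () => {};"),
  logGroup, // requires an aws-cdk-lib version that supports this prop
});

app.synth();
```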

Alternatives Considered

The EventBridge vs SQS for S3 fan-out question comes up every time I look at this pattern. Here's the honest breakdown:

Direct S3 event notification to Lambda - lower latency (typically seconds), zero CloudTrail cost for the eventing layer, simpler setup. But for a given event type, notifications with overlapping prefix/suffix filters can only point at one destination (one SQS queue, one SNS topic, or one Lambda function). Fan-out requires SNS in the middle. And you lose the audit trail entirely. For anything beyond a single-consumer toy project, the limitations surface fast.
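For comparison, the direct-notification wiring in CDK is a single call. Here is a minimal sketch, assuming aws-cdk-lib v2, with hypothetical construct IDs and prefix:

```typescript
import { App, Stack } from "aws-cdk-lib";
import * as lambda from "aws-cdk-lib/aws-lambda";
import * as s3 from "aws-cdk-lib/aws-s3";
import * as s3n from "aws-cdk-lib/aws-s3-notifications";

const app = new App();
const stack = new Stack(app, "DirectNotificationStack");

const bucket = new s3.Bucket(stack, "IngestBucket");
const fn = new lambda.Function(stack, "Processor", {
  runtime: lambda.Runtime.NODEJS_20_X,
  handler: "index.handler",
  code: lambda.Code.fromInline("exports.handler = async () => {};"),
});

// One destination for ObjectCreated:Put events under incoming/.
// A second configuration with the same event type and an overlapping
// prefix would be rejected by S3, which is where SNS fan-out comes in.
bucket.addEventNotification(
  s3.EventType.OBJECT_CREATED_PUT,
  new s3n.LambdaDestination(fn),
  { prefix: "incoming/" },
);

app.synth();
```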

S3 → SQS → Lambda - OK so this is actually a solid pattern for high-throughput workloads that don't need EventBridge's filtering richness, and honestly I think it's underrated. SQS gives you batching, dead-letter queues, and visibility timeout semantics. The trade-off is you lose the EventBridge rule-based routing and you need to handle S3 notification fan-out differently. But if real-time audit isn't a requirement, I'd seriously consider this over the CloudTrail path for cost-sensitive pipelines. The ops story is simpler, the cost is more predictable, and the DLQ behaviour alone has saved me from more than a few incidents. I did not see this coming when I first started comparing these patterns - SQS looks "old" next to EventBridge but it's genuinely the right call in a lot of situations.
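Here is a sketch of the S3 to SQS to Lambda variant, assuming aws-cdk-lib v2. Names, timeouts, and the receive count are illustrative. The queue's visibility timeout is six times the function timeout (AWS's guidance for SQS event sources), and `reportBatchItemFailures` lets the handler return only the failed messages for retry instead of failing the whole batch.

```typescript
import { App, Duration, Stack } from "aws-cdk-lib";
import * as lambda from "aws-cdk-lib/aws-lambda";
import { SqsEventSource } from "aws-cdk-lib/aws-lambda-event-sources";
import * as s3 from "aws-cdk-lib/aws-s3";
import * as s3n from "aws-cdk-lib/aws-s3-notifications";
import * as sqs from "aws-cdk-lib/aws-sqs";

const app = new App();
const stack = new Stack(app, "S3SqsLambdaStack");

const bucket = new s3.Bucket(stack, "IngestBucket");

// Messages that fail processing three times land in the DLQ.
const dlq = new sqs.Queue(stack, "IngestDlq", { retentionPeriod: Duration.days(14) });
const queue = new sqs.Queue(stack, "IngestQueue", {
  visibilityTimeout: Duration.seconds(180), // 6 x the 30s function timeout
  deadLetterQueue: { queue: dlq, maxReceiveCount: 3 },
});

// S3 sends ObjectCreated events under incoming/ to the queue.
bucket.addEventNotification(
  s3.EventType.OBJECT_CREATED,
  new s3n.SqsDestination(queue),
  { prefix: "incoming/" },
);

const fn = new lambda.Function(stack, "Processor", {
  runtime: lambda.Runtime.NODEJS_20_X,
  handler: "index.handler",
  // Placeholder handler that reports no failed messages.
  code: lambda.Code.fromInline("exports.handler = async () => ({ batchItemFailures: [] });"),
  timeout: Duration.seconds(30),
});

// Lambda polls the queue in batches of up to 10 messages.
fn.addEventSource(new SqsEventSource(queue, { batchSize: 10, reportBatchItemFailures: true }));

app.synth();
```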

S3 Batch Operations - completely different use case but worth mentioning: if you're processing existing objects rather than reacting to new uploads, S3 Batch Operations is dramatically cheaper than a continuous event pipeline. $0.25 per job + $1 per million object operations.

The CloudTrail+EventBridge path wins when you need: audit records, multi-consumer fan-out, and EventBridge's pattern matching on event metadata. It loses when you need: low latency, simple single-consumer setup, or cost predictability at high PUT volumes.

Savings Estimate and Assumptions

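These are rough numbers for a medium-traffic production workload, assuming a 30-day month, one data event per PUT, and three interface endpoints at ~$7.30 per AZ-month. Your mileage will vary. The KMS column assumes something like S3 Bucket Keys keeps S3's per-object KMS calls down; with a KMS call on every PUT, 10M PUTs/day alone is ~300M requests a month, or about $900.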

| Scenario | Monthly CloudTrail | Monthly VPC Endpoints | Monthly KMS | Total |
|---|---|---|---|---|
| 100k PUTs/day, 2 AZ, filtered selectors | ~$3 | ~$44 | ~$2 | ~$49 |
| 1M PUTs/day, 3 AZ, full data events | ~$30 | ~$66 | ~$15 | ~$111 |
| 10M PUTs/day, 3 AZ, full data events | ~$300 | ~$66 | ~$45 | ~$411 |

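Lambda execution cost at 1M invocations/month (512MB, 500ms avg): ~$5. At 10M: ~$44. Note the units: the table rows are PUTs per day, while these are invocations per month. If every PUT invoked the function, 1M PUTs/day would be ~30M invocations/month, or roughly $130 at these settings.

And here's the kicker: CloudTrail bills for every data event the trail records, not just the ones your EventBridge rule hands to Lambda. When the function only acts on a slice of that traffic, your event pipeline infrastructure can easily cost more than your compute. Model CloudTrail first, not last.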
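To check the compute side of that comparison, here is a minimal sketch of Lambda pricing. It assumes x86 list prices of $0.0000166667 per GB-second and $0.20 per million requests, with no free tier; `lambdaMonthlyCost` is an illustrative name.

```typescript
// Sketch: Lambda compute + request cost.
// Assumptions: x86 pricing, $0.0000166667 per GB-second, $0.20 per 1M requests, no free tier.
const PRICE_PER_GB_SECOND = 0.0000166667;
const PRICE_PER_MILLION_REQUESTS = 0.2;

function lambdaMonthlyCost(invocations: number, memoryMb: number, avgDurationMs: number): number {
  const gbSeconds = invocations * (memoryMb / 1024) * (avgDurationMs / 1000);
  const compute = gbSeconds * PRICE_PER_GB_SECOND;
  const requests = (invocations / 1_000_000) * PRICE_PER_MILLION_REQUESTS;
  return compute + requests;
}

console.log(lambdaMonthlyCost(1_000_000, 512, 500).toFixed(2)); // 4.37
console.log(lambdaMonthlyCost(10_000_000, 512, 500).toFixed(2)); // 43.67
console.log(lambdaMonthlyCost(30_000_000, 512, 500).toFixed(2)); // 131.00
```

The third line is the "every PUT invokes Lambda" case at 1M PUTs/day. Once the function runs on all of the traffic, compute overtakes the ~$111 pipeline row.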

Filtering CloudTrail to a specific prefix (saving ~70% of events at 1M PUTs/day): saves ~$21/month. Dropping from 3 AZ to 2 AZ on VPC endpoints (for non-critical workloads): saves ~$22/month. Neither of these breaks anything architecturally.

Operational Trade-Offs

A few things I want to flag that don't show up in cost calculators.

The latency trade-off is real. Routing CloudTrail data events through EventBridge can add roughly 1-3 seconds end-to-end compared to direct notification. For an audit pipeline processing compliance records, this is completely fine. For a user-facing feature that shows a "processing" spinner, it's probably not. Be honest about which one you're building, right?

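VPC cold starts are less of a problem than they used to be. Since AWS moved VPC-attached functions to shared Hyperplane ENIs in 2019, ENI setup happens when the function is created or updated rather than on each cold start, so the old multi-second penalty is mostly gone. Provisioned Concurrency still helps with general cold-start latency but costs money. If your Lambda is invoked infrequently (hourly batch jobs, low-traffic buckets), VPC placement might not be worth the complexity.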

If the CloudTrail trail goes dark - trail disabled, destination bucket ACL changes, something breaks in the audit log path - EventBridge stops receiving S3 events. Silently. There's no dead-letter queue on this path and no native alerting on event delivery failures in this stack. You won't know until someone notices Lambda hasn't run in 4 hours. (I learned this at the worst possible time.) Set a CloudWatch alarm on EventBridge FailedInvocations and separately monitor that the CloudTrail trail is active.
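One way to wire this up, as a sketch assuming aws-cdk-lib v2 (rule pattern, construct IDs, and thresholds are hypothetical). The first alarm watches the rule's `FailedInvocations`. The second is a heartbeat on the function's invocation count, because a dark trail produces no failed deliveries at all, just silence. Alarm actions (SNS, paging) are left out.

```typescript
import { App, Duration, Stack } from "aws-cdk-lib";
import * as cloudwatch from "aws-cdk-lib/aws-cloudwatch";
import * as events from "aws-cdk-lib/aws-events";
import * as targets from "aws-cdk-lib/aws-events-targets";
import * as lambda from "aws-cdk-lib/aws-lambda";

const app = new App();
const stack = new Stack(app, "PipelineAlarmsStack");

const fn = new lambda.Function(stack, "Processor", {
  runtime: lambda.Runtime.NODEJS_20_X,
  handler: "index.handler",
  code: lambda.Code.fromInline("exports.handler = async () => {};"),
});

// Hypothetical rule; narrow the pattern to your bucket and prefix.
const rule = new events.Rule(stack, "OnIncomingPut", {
  eventPattern: {
    source: ["aws.s3"],
    detailType: ["AWS API Call via CloudTrail"],
    detail: { eventName: ["PutObject"] },
  },
  targets: [new targets.LambdaFunction(fn)],
});

// Alarm 1: EventBridge tried to deliver to the target and failed.
new cloudwatch.Metric({
  namespace: "AWS/Events",
  metricName: "FailedInvocations",
  dimensionsMap: { RuleName: rule.ruleName },
  statistic: "Sum",
  period: Duration.minutes(5),
}).createAlarm(stack, "RuleFailedInvocations", {
  threshold: 1,
  evaluationPeriods: 1,
  comparisonOperator: cloudwatch.ComparisonOperator.GREATER_THAN_OR_EQUAL_TO_THRESHOLD,
  treatMissingData: cloudwatch.TreatMissingData.NOT_BREACHING,
});

// Alarm 2: heartbeat. No invocations for four consecutive hours (missing data
// counts as breaching) suggests events have stopped arriving at all.
fn.metricInvocations({ period: Duration.hours(1), statistic: "Sum" }).createAlarm(
  stack,
  "ProcessorSilent",
  {
    threshold: 1,
    evaluationPeriods: 4,
    comparisonOperator: cloudwatch.ComparisonOperator.LESS_THAN_THRESHOLD,
    treatMissingData: cloudwatch.TreatMissingData.BREACHING,
  },
);

app.synth();
```

Tune the heartbeat window to your expected traffic. A bucket that legitimately goes quiet overnight needs a longer window or a schedule-aware check.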

Not ideal.

KMS key deletion is the nuclear option nobody thinks about until it happens. If the CMK gets deleted or the key policy drifts, S3 can't decrypt objects, Lambda can't read environment variables, and CloudWatch Logs can't write - all simultaneously, no graceful degradation. Tag your KMS keys, set key deletion windows to the maximum (30 days), and test your KMS key policy (least privilege) in staging before it surprises you in prod.
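Here is a minimal CDK sketch of those key settings, assuming aws-cdk-lib v2; the alias and tag values are hypothetical. `pendingWindow` applies whenever deletion is scheduled, and `RemovalPolicy.RETAIN` keeps the key alive if the stack itself is deleted.

```typescript
import { App, Duration, RemovalPolicy, Stack, Tags } from "aws-cdk-lib";
import * as kms from "aws-cdk-lib/aws-kms";

const app = new App();
const stack = new Stack(app, "PipelineKeysStack");

const key = new kms.Key(stack, "PipelineKey", {
  alias: "alias/ingest-pipeline", // hypothetical alias
  description: "CMK for the S3 -> EventBridge -> Lambda pipeline",
  enableKeyRotation: true,
  pendingWindow: Duration.days(30), // maximum waiting period once deletion is scheduled
  removalPolicy: RemovalPolicy.RETAIN, // deleting the stack leaves the key in place
});

// Tags make the key's blast radius obvious to whoever finds it later.
Tags.of(key).add("workload", "ingest-pipeline");
Tags.of(key).add("owner", "platform-team");

app.synth();
```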

Risks of Over-Optimization

I've seen teams swing too hard the other way and regret it.

Switching from CloudTrail+EventBridge back to direct S3 notifications to save $30/month is a legitimate choice, but only if you genuinely don't need the audit trail. The moment a compliance requirement lands - GDPR deletion verification, SOC2, a data breach inquiry - you'll wish you had the CloudTrail record. Don't cut the audit trail for cost reasons without a documented decision.

Removing VPC placement to avoid endpoint costs is also tempting. But if your Lambda is reading from Secrets Manager and SSM, running it outside VPC means those credentials transit the public internet (even over TLS). Some security postures require private network access. Know whether yours is one of them before removing the VPC.

Switching to a single shared KMS key to reduce complexity is fine until the key policy needs to grant access to a new service and you have to audit every policy that touches that key simultaneously.

Recommendation

Start with CloudTrail event selector scoping. It's a one-line CDK change, it saves real money at scale, and it has zero operational risk. Filter to the prefix and object operations your Lambda actually needs. This is actually the easiest win on this whole list.

Then model your VPC endpoint count against your AZ requirements. If this is a non-customer-facing pipeline, 2 AZs might be acceptable and saves ~$7.30/month per interface endpoint (~$22/month across the three in this setup).

Hold off on KMS changes until you've measured actual call volume in CloudWatch. The math is scary in theory but often benign in practice for moderate-throughput workloads. Optimize it when you have real data, not upfront.

Don't remove the CloudTrail trail to save money unless you have a documented alternative for object-level audit. The EventBridge routing and fan-out capability it buys you is worth the cost at most scales - the optimization is in scoping it, not eliminating it.

Next Step

free: Diagram your current event path against the cost model above. Identify whether your CloudTrail data event selectors are scoped to a specific prefix or capturing everything. Check your VPC endpoint count against actual AZ usage. These three checks cost you nothing and surface most of the savings opportunity.

paid: Full CDK stack walkthrough including IAM least-privilege policies, VPC endpoint configuration in TypeScript, KMS key policy design that doesn't brick the stack on drift, per-volume cost model for CloudTrail data events vs Lambda execution across five S3 PUT volume scenarios, what exactly breaks when the CloudTrail trail goes dark and how to detect it, and a side-by-side comparison of all four alternatives (direct S3 notification, SQS fan-out, EventBridge, S3 Batch Operations) with when to use each.

If you'd rather just talk through the architecture for your specific setup - drop a note. I'm usually pretty quick to reply when the problem is interesting.