Building a Real-Time Change Data Capture Pipeline with AWS DMS, Kafka, and Kinesis

Production-ready AWS CDC template using DMS, Kafka, and Kinesis for real-time data streaming. Terraform-based, scalable, fault-tolerant, and built for high-availability workloads. Ideal for reliable, repeatable, enterprise-grade data pipelines.

The Problem

Modern applications need to react to data changes in real time.

Think about it. Your database just got updated. Now you need to:

- Replicate that change to another database

- Send events to downstream systems

- Update search indexes

- Trigger business logic in other services

- Maintain audit logs

You could poll the database every few seconds. But that's slow and wasteful.

You could write application code to publish events after every write. But that's error-prone. What if the write succeeds but the event publish fails?

What you really need is Change Data Capture. CDC reads database transaction logs and streams changes in real time. No polling. No dual writes. Just reliable change propagation.

The challenge? Building a production-ready CDC pipeline that's scalable, resilient, and actually works.

What We Built

This project is a production-ready infrastructure template for Change Data Capture streaming on AWS.

It integrates AWS Database Migration Service (DMS) for reading database change logs, Apache Kafka for event streaming at scale, and Amazon Kinesis for managed stream processing. The infrastructure is defined as Terraform code, so you can deploy it repeatably across environments.

The system captures database changes, transforms them into events, and streams them to consumers in real time. It's built for high availability, automatic scaling, and fault tolerance.

This isn't a toy example. It's designed for production workloads.

Architecture Overview

The architecture follows a layered approach with clear separation of concerns.

Core Components

Edge Layer

API Gateway and CloudFront sit at the edge. They handle incoming requests and route them to the appropriate services. AWS WAF protects against common web exploits.

Compute Layer

We use a mix of Lambda functions for event-driven workloads, ECS Fargate for containerized services, and EC2 Auto Scaling Groups for compute-intensive tasks. Each has its place.

Lambda is great for bursty, stateless operations. ECS handles long-running services. EC2 gives you control when you need specific instance types or configurations.

Data Layer

RDS/Aurora for relational data. DynamoDB for key-value lookups. S3 for object storage. ElastiCache (Redis) for caching and session state.

The data layer is where CDC happens. DMS watches the RDS transaction logs and captures every INSERT, UPDATE, and DELETE.

Streaming Layer (not shown in generic diagrams, but core to this project)

This is where the magic happens:

- DMS captures changes from the source database

- Changes are pushed to Kafka topics for durable streaming

- Kinesis processes and transforms events

- Consumers read from Kafka/Kinesis to react to changes

Observability Stack

CloudWatch collects metrics and logs. X-Ray provides distributed tracing across services. SNS sends alerts when things go wrong.

Data Flow

Here's how a typical request flows through the system:

- User sends an API request

- API Gateway validates and authenticates the request

- Request hits the compute layer (Lambda/ECS/EC2)

- Compute checks the cache first (ElastiCache)

- On cache miss, query the database

- Return response to user

- Meanwhile, any database write triggers DMS

- DMS captures the change and publishes to Kafka

- Downstream consumers process the event

- Metrics and logs flow to CloudWatch

The system uses caching aggressively to reduce database load. Cache invalidation happens via CDC events.
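
Here's a minimal sketch of what CDC-driven cache invalidation could look like. The event shape (operation, table, primary_key), the key format, and the in-memory dictionary standing in for ElastiCache are all assumptions made for illustration, not code from the template.

```python
from typing import Dict, Optional

# Hypothetical in-memory stand-in for ElastiCache (Redis).
cache: Dict[str, dict] = {}


def cache_key(table: str, primary_key: str) -> str:
    """Build the cache key for one row, e.g. 'orders:42'."""
    return f"{table}:{primary_key}"


def handle_cdc_event(event: dict) -> Optional[str]:
    """Evict the cached row touched by an update or delete event.

    Returns the evicted key, or None when nothing had to be evicted.
    """
    if event["operation"] not in ("update", "delete"):
        return None  # an insert has no previously cached row to evict
    key = cache_key(event["table"], str(event["primary_key"]))
    return key if cache.pop(key, None) is not None else None


cache["orders:42"] = {"status": "pending"}
evicted = handle_cdc_event({"operation": "update", "table": "orders", "primary_key": 42})
print(evicted)  # orders:42
```

Evicting rather than rewriting the entry keeps the consumer simple: the next read misses the cache and reloads the row from the database.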

Design Tradeoffs

Multi-AZ Deployment

Everything runs across multiple availability zones. This costs more but lets us target 99.99% availability, because losing a single AZ no longer takes the system down. The alternative is a cheaper single-AZ deployment with higher downtime risk.

Managed vs Self-Managed

We use managed services (RDS, DMS, MSK, Kinesis) instead of self-hosting (databases on EC2, custom CDC code, self-managed Kafka). Managed services cost more per unit but significantly reduce the operational burden.

Event Schema Evolution

We don't enforce strict schemas in this template. You'll need to add a schema registry (like AWS Glue Schema Registry) for production use. This keeps the template simple but requires additional setup.

Deployment Flow

The deployment follows a progressive delivery model:

- Local Development - Write Terraform code locally

- CI/CD Pipeline - Push to GitHub triggers automated builds

- Build & Test - Compile, package, run unit and integration tests

- Staging Environment - Deploy to pre-production for validation

- Canary Deployment - Route 10% of production traffic to new version

- Health Checks - Monitor error rates, latency, and custom metrics

- Progressive Rollout - If healthy, increase to 50%, then 100%

- Auto Rollback - Any health check failure triggers automatic rollback

This minimizes deployment risk. You never deploy directly to 100% of production.
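
The rollout logic above can be sketched in a few lines. This is an illustration only: the is_healthy callback is a hypothetical stand-in for whatever error-rate and latency checks your pipeline runs at each stage.

```python
from typing import Callable, List

ROLLOUT_STAGES = [10, 50, 100]  # percent of production traffic, as in the steps above


def progressive_rollout(is_healthy: Callable[[int], bool]) -> List[str]:
    """Walk through the traffic stages, rolling back on the first failed check."""
    log = []
    for percent in ROLLOUT_STAGES:
        log.append(f"shift {percent}%")
        if not is_healthy(percent):
            log.append("rollback to 0%")
            return log
    log.append("rollout complete")
    return log


print(progressive_rollout(lambda percent: True))
# ['shift 10%', 'shift 50%', 'shift 100%', 'rollout complete']
print(progressive_rollout(lambda percent: percent < 50))
# ['shift 10%', 'shift 50%', 'rollback to 0%']
```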

Network Architecture

The VPC is segmented into three subnet types:

Public Subnets (10.0.1.0/24, 10.0.2.0/24)

These host NAT Gateways and have direct internet access via Internet Gateway. Load balancers also sit here.

Private Subnets (10.0.11.0/24, 10.0.12.0/24)

Application servers run here. They can reach the internet via NAT Gateway but cannot be reached from the internet. This is where your compute actually lives.

Database Subnets (10.0.21.0/24, 10.0.22.0/24)

These are completely isolated. No direct internet access at all. Only application servers in private subnets can connect here.

Each subnet type spans two availability zones for redundancy. If one AZ fails, traffic automatically shifts to the other.
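
Before applying a layout like this, it's worth a quick sanity check that the subnets fit inside the VPC and don't overlap. The sketch below uses Python's standard ipaddress module and the 10.0.0.0/16 VPC range from the Terraform example later in this article; the validate_layout helper is a name I made up for illustration.

```python
import ipaddress
from itertools import combinations

VPC_CIDR = ipaddress.ip_network("10.0.0.0/16")
SUBNETS = {
    "public": ["10.0.1.0/24", "10.0.2.0/24"],
    "private": ["10.0.11.0/24", "10.0.12.0/24"],
    "database": ["10.0.21.0/24", "10.0.22.0/24"],
}


def validate_layout(vpc, subnets):
    """Return a list of problems: subnets outside the VPC or overlapping each other."""
    networks = [ipaddress.ip_network(c) for cidrs in subnets.values() for c in cidrs]
    problems = [f"{n} is outside {vpc}" for n in networks if not n.subnet_of(vpc)]
    problems += [f"{a} overlaps {b}" for a, b in combinations(networks, 2) if a.overlaps(b)]
    return problems


print(validate_layout(VPC_CIDR, SUBNETS))  # []
```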

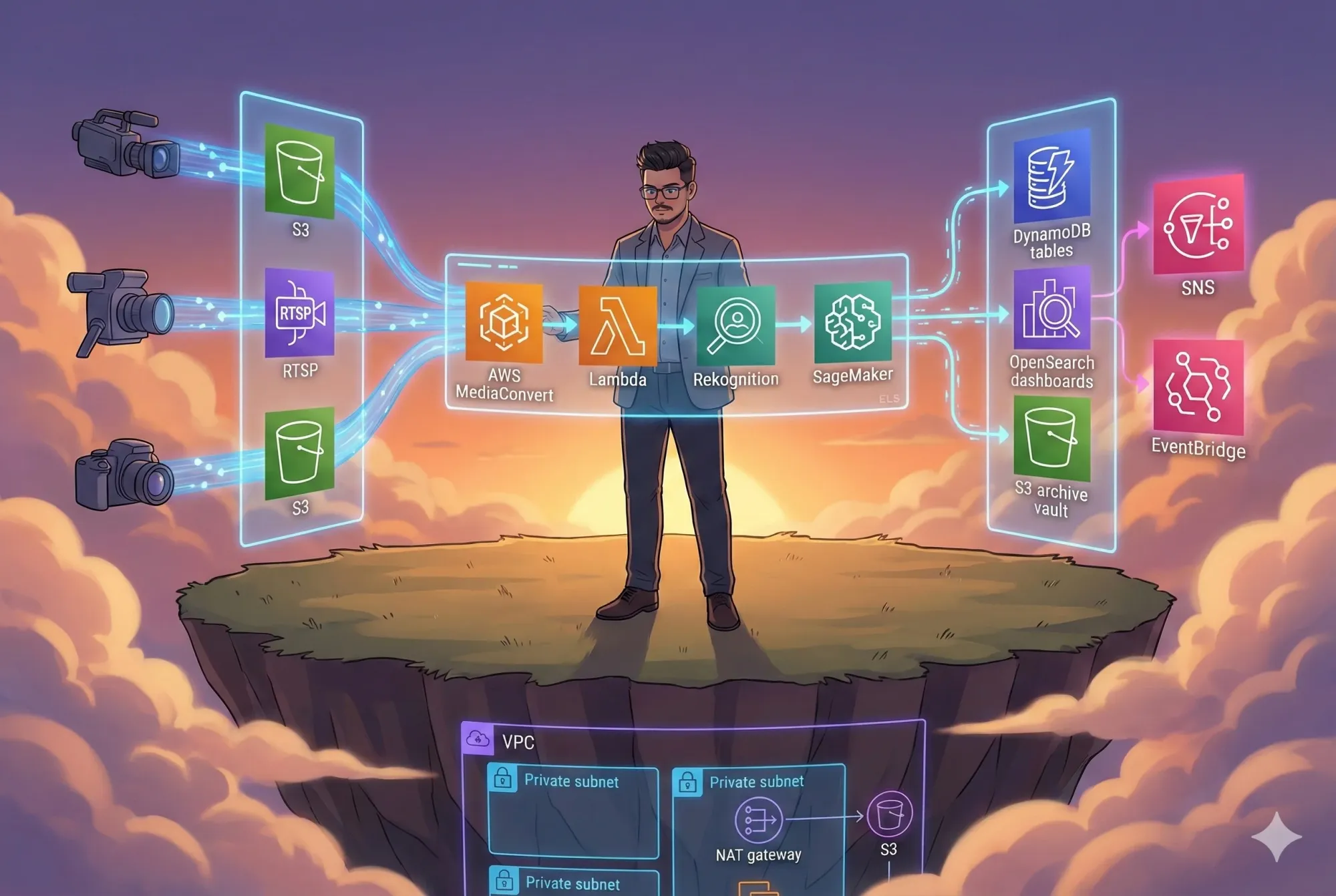

System Architecture Diagram


How It Works

Let me walk you through the major components in detail.

Database Change Data Capture

AWS DMS is the heart of the CDC pipeline. It connects to your RDS instance and reads the database's transaction log (the binary log for MySQL, the write-ahead log for PostgreSQL).

When a row changes, DMS captures:

- The change type (INSERT, UPDATE, DELETE)

- The before and after values

- Timestamp and transaction metadata

- Primary key for identification
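
To make that concrete, here's a sketch of parsing one change event in Python. The JSON layout is loosely modeled on the messages DMS writes to streaming targets (a data object plus a metadata object); treat the exact field names, including before-image, as assumptions and check them against real messages from your task. ChangeEvent and parse_change_event are illustrative names, not part of the template.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeEvent:
    operation: str          # insert, update, or delete
    table: str              # schema-qualified table name
    timestamp: str
    after: dict             # row values after the change
    before: Optional[dict]  # prior values, when the task emits them


def parse_change_event(raw: str) -> ChangeEvent:
    """Turn one JSON message into a ChangeEvent."""
    message = json.loads(raw)
    meta = message["metadata"]
    return ChangeEvent(
        operation=meta["operation"],
        table=f'{meta["schema-name"]}.{meta["table-name"]}',
        timestamp=meta["timestamp"],
        after=message.get("data", {}),
        before=message.get("before-image"),
    )


# Assumed sample message for an UPDATE on public.orders.
sample = json.dumps({
    "data": {"id": 42, "status": "shipped"},
    "before-image": {"status": "pending"},
    "metadata": {
        "timestamp": "2024-01-01T12:00:00.000000Z",
        "record-type": "data",
        "operation": "update",
        "schema-name": "public",
        "table-name": "orders",
    },
})
event = parse_change_event(sample)
print(event.operation, event.table, event.after["id"])  # update public.orders 42
```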

DMS then publishes this change event to a target. In this architecture, targets include:

- Apache Kafka topics

- Amazon Kinesis streams

- S3 for archival

- Another database for replication

The key advantage: DMS reads from transaction logs, not from application code. This means:

- No application changes required

- Minimal performance impact on writes, since changes are read from the log rather than queried from tables

- Events reflect exactly what was committed to the database

Event Streaming with Kafka

Kafka acts as the durable event log. Events flow into Kafka topics partitioned by some key (usually the database table name or entity ID).
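
Why does the partition key matter? Every event with the same key lands in the same partition, so changes to one entity stay in order. Here's a small sketch of the idea. Kafka's default partitioner uses a murmur2 hash; I'm using SHA-256 from the standard library as a stand-in, so the partition numbers won't match a real cluster.

```python
import hashlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (stand-in for Kafka's murmur2)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


# Hypothetical entity IDs used as keys.
events = [("order-42", "created"), ("order-7", "created"), ("order-42", "shipped")]
for key, change in events:
    print(key, change, "-> partition", partition_for(key, 6))
```

Both order-42 events map to the same partition, so a consumer sees "created" before "shipped". Keying by table name alone gives ordering per table but funnels a busy table into a single partition.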

Why Kafka?

- High throughput (millions of events per second)

- Durable storage (events are persisted to disk)

- Multiple consumers (many services can read the same events)

- Replay capability (consumers can reread old events)

You can run Kafka on AWS in two ways:

- Amazon MSK (Managed Streaming for Apache Kafka) - fully managed

- Self-hosted on EC2 - more control, more operational burden

For production, I recommend MSK. Let AWS handle cluster management, patching, and scaling.

Stream Processing with Kinesis

Amazon Kinesis complements Kafka for real-time processing. While Kafka is great for durable event storage, Kinesis excels at stream analytics.

Typical Kinesis use cases in this pipeline:

- Aggregating change events over time windows

- Filtering events based on criteria

- Enriching events with data from other sources

- Writing processed data to data lakes (S3) or warehouses (Redshift)

Kinesis integrates natively with other AWS services, making it easier to build serverless processing pipelines.
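
To show what filtering and windowed aggregation mean here, the sketch below counts change events per fixed one-minute window in plain Python. It's a local illustration of the technique, not Kinesis code: the (timestamp, table) tuples are made-up sample data, and a real pipeline would run this kind of logic in a stream processor.

```python
from collections import Counter
from typing import Iterable, Tuple


def count_per_window(events: Iterable[Tuple[int, str]], window_seconds: int = 60) -> Counter:
    """Count events per (window start, table) for fixed, non-overlapping windows."""
    counts = Counter()
    for epoch_seconds, table in events:
        window_start = epoch_seconds - (epoch_seconds % window_seconds)
        counts[(window_start, table)] += 1
    return counts


events = [(0, "orders"), (15, "orders"), (59, "users"), (60, "orders"), (125, "orders")]
# Filter first, then aggregate: keep only changes to the orders table.
orders_only = [e for e in events if e[1] == "orders"]
print(dict(count_per_window(orders_only)))
# {(0, 'orders'): 2, (60, 'orders'): 1, (120, 'orders'): 1}
```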

Multi-AZ High Availability

Everything in this architecture is deployed across multiple availability zones.

Compute Layer

Auto Scaling Groups span multiple AZs. If instances in one AZ fail, new instances spin up in another AZ automatically.

Data Layer

RDS uses Multi-AZ deployments with automatic failover. DynamoDB replicates across AZs by default.

Load Balancing

Application Load Balancers distribute traffic across AZs. Health checks remove unhealthy targets automatically.

The goal: survive entire AZ failures without manual intervention.

Monitoring and Observability

You can't fix what you can't see.

CloudWatch Dashboards show:

- Infrastructure metrics (CPU, memory, network, disk)

- Application metrics (request count, latency, error rate)

- Business metrics (events processed, replication lag)

CloudWatch Alarms trigger on:

- High CPU usage (>80% for 5 minutes)

- Error rate spikes (>1% of requests)

- Database connection failures

- Disk space warnings (>85% full)
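
A threshold like ">80% for 5 minutes" means every datapoint in the evaluation window must breach, not just one spike. Here's a rough sketch of that rule, assuming one datapoint per minute; alarm_breached is an illustrative helper, not a CloudWatch API.

```python
from typing import Sequence


def alarm_breached(datapoints: Sequence[float], threshold: float, periods: int) -> bool:
    """True when the most recent `periods` datapoints all exceed the threshold."""
    if len(datapoints) < periods:
        return False  # not enough data to evaluate yet
    return all(value > threshold for value in datapoints[-periods:])


cpu_per_minute = [62.0, 85.5, 91.0, 88.2, 83.7, 90.1]
print(alarm_breached(cpu_per_minute, threshold=80.0, periods=5))  # True
print(alarm_breached([95.0, 70.0, 95.0, 95.0, 95.0], threshold=80.0, periods=5))  # False
```

Requiring sustained breaches is what keeps short spikes from paging someone at 3 a.m.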

X-Ray Distributed Tracing shows:

- End-to-end request flow across services

- Bottlenecks and slow operations

- Service dependency map

- Error traces with full context

When an alarm fires, SNS sends notifications by email, or to Slack and PagerDuty through integrations such as AWS Chatbot or an HTTPS webhook subscription.

Security Model

Security is built-in, not bolted on.

Encryption

- All data at rest is encrypted using AWS KMS

- All data in transit uses TLS (1.2 or later)

- Database backups are encrypted

- S3 buckets enforce encryption

Network Isolation

- Databases live in isolated database subnets with no internet access

- Security groups act as virtual firewalls

- VPC Flow Logs track all network traffic

- NAT Gateways are the only egress path for private resources

IAM Least Privilege

Every service gets its own IAM role with minimum necessary permissions. No shared credentials. No long-lived access keys.
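
As an example of what "minimum necessary permissions" can look like, here's a sketch that builds a read-only IAM policy document for a consumer of a single Kinesis stream. The account ID and stream name are hypothetical, and the action list is my assumption of what a basic polling consumer needs; adjust it to what your consumer actually calls.

```python
import json


def kinesis_reader_policy(stream_arn: str) -> dict:
    """Build a read-only policy scoped to a single Kinesis stream."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "kinesis:DescribeStreamSummary",
                    "kinesis:ListShards",
                    "kinesis:GetShardIterator",
                    "kinesis:GetRecords",
                ],
                # One stream only: no wildcard resources.
                "Resource": stream_arn,
            }
        ],
    }


# Hypothetical account ID and stream name.
policy = kinesis_reader_policy("arn:aws:kinesis:us-east-1:123456789012:stream/cdc-events")
print(json.dumps(policy, indent=2))
```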

Audit Logging

CloudTrail logs every API call. Who did what, when, from where. Immutable logs stored in S3.

WAF Protection

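AWS WAF blocks common application-layer attacks (SQL injection, XSS, HTTP request floods). AWS Shield covers network-level DDoS protection. AWS managed rule groups are updated automatically.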

Build It Yourself

Let's deploy this infrastructure step by step.

Prerequisites

You need:

- AWS CLI version 2.13.0 or later

- Terraform version 1.5.0 or later

- An AWS account with administrative access

- Basic understanding of AWS networking and Terraform

Install requirements:

# Check AWS CLI version

aws --version

# Configure AWS credentials

aws configure

# Install Terraform (macOS)

brew tap hashicorp/tap

brew install hashicorp/tap/terraform

# Verify Terraform installation

terraform version

Environment Setup

Clone the repository:

git clone https://github.com/rahulladumor/change-data-capture-streaming.git

cd change-data-capture-streaming

Create a terraform.tfvars file (don't commit this to git):

region = "us-east-1"

environment = "dev"

project_name = "change-data-capture-streaming"

The project uses these variables:

- region - AWS region for deployment

- environment - Environment name (dev, staging, prod)

- project_name - Project identifier for resource tagging

Configuration

The Terraform configuration is minimal by design. It's a template you'll extend.

main.tf sets up the Terraform provider and AWS region. This is where you'll add your infrastructure resources:

- VPC and subnets

- DMS replication instances and tasks

- Kafka (MSK) clusters

- Kinesis streams

- RDS instances

- Security groups

variables.tf defines input variables with sensible defaults.

outputs.tf exports values you'll need later (endpoint URLs, resource IDs).

Deployment Steps

Initialize Terraform:

terraform init

This downloads provider plugins and sets up the backend.

Preview changes:

terraform plan

Review the plan carefully. Terraform shows exactly what it will create, modify, or destroy.

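Deploy to development:

terraform apply

Terraform loads terraform.tfvars automatically. If you keep one variables file per environment instead (for example dev.tfvars), pass it with -var-file=dev.tfvars.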

Terraform will ask for confirmation. Type yes to proceed.

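Deployment of a full stack (RDS, DMS, MSK, networking) takes a while. Budget at least 10-15 minutes, and often longer, because MSK cluster and RDS instance creation are the slowest steps.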

Cloud Permissions

The Terraform execution role needs extensive permissions to create infrastructure. At minimum:

- VPC and networking (ec2:*)

- Database services (rds:*, dms:*)

- Streaming services (kafka:*, kinesis:*)

- IAM role creation

- CloudWatch logging

For production, use a dedicated CI/CD role with these permissions. Never use root credentials.

Common Mistakes and Fixes

Error: "InvalidSubnet: The subnet must contain at least 2 availability zones"

Fix: Make sure you're creating subnets in at least two AZs. Check your VPC configuration.

Error: "DMS replication instance already exists"

Fix: DMS replication instance identifiers must be unique within your AWS account and region. Change the instance name or delete the old instance.

Error: "Insufficient capacity"

Fix: AWS occasionally runs out of capacity for specific instance types in specific AZs. Try a different instance type or region.

Kafka connection timeouts

Fix: Check security group rules. Your consumer must be able to reach Kafka brokers on port 9092 (plaintext) or 9094 (TLS).
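
A quick way to tell a security group problem from a Kafka problem is a plain TCP connection test from the consumer's host. Here's a minimal sketch using Python's socket module. The demo connects to a throwaway local listener so it runs anywhere; in practice you would pass one of your broker hostnames and port 9092 or 9094.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False  # refused, timed out, or name resolution failed


# Demo against a local listener standing in for a broker.
listener = socket.socket()
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen(1)
demo_host, demo_port = listener.getsockname()
print(can_reach(demo_host, demo_port))  # True
listener.close()
```

If the TCP connection times out, look at security groups, NACLs, and routing. If it connects but the client still fails, the issue is more likely TLS or authentication settings.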

DMS lag is high

Fix: Increase DMS instance size or add more table parallelism in the task settings.

Key Code Sections

Let me highlight the important parts of the infrastructure code.

Terraform Provider Configuration

File: main.tf (lines 1-13)

terraform {
  required_version = ">= 1.5.0"
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

provider "aws" {
  region = var.region
}

This requires Terraform 1.5.0 or newer and constrains the AWS provider to 5.x releases. Version constraints prevent unexpected breaking changes during deployments.

The region parameter is configurable via variables, making it easy to deploy across regions.

Variable Definitions

File: variables.tf

variable "region" {
  description = "AWS region"
  type        = string
  default     = "us-east-1"
}

variable "environment" {
  description = "Environment name"
  type        = string
  default     = "dev"
}

variable "project_name" {
  description = "Project name"
  type        = string
}

These variables control where and how the infrastructure deploys. The project_name has no default - you must provide it explicitly. This prevents accidental deployments.

Outputs

File: outputs.tf

output "project_name" {
  description = "Project name"
  value       = var.project_name
}

As you build out the infrastructure, you'll add more outputs:

- DMS endpoint

- Kafka bootstrap servers

- Kinesis stream ARN

- Database connection strings

Outputs make it easy to reference resources across Terraform modules or in application configuration.

Where to Add Your Infrastructure

The current main.tf is intentionally minimal. Here's what you'll add:

VPC and Networking

module "vpc" {
  source = "terraform-aws-modules/vpc/aws"

  name = "${var.project_name}-vpc"
  cidr = "10.0.0.0/16"

  azs              = ["us-east-1a", "us-east-1b"]
  public_subnets   = ["10.0.1.0/24", "10.0.2.0/24"]
  private_subnets  = ["10.0.11.0/24", "10.0.12.0/24"]
  database_subnets = ["10.0.21.0/24", "10.0.22.0/24"]

  enable_nat_gateway   = true
  enable_dns_hostnames = true

  tags = {
    Environment = var.environment
    Project     = var.project_name
  }
}

DMS Replication Instance

resource "aws_dms_replication_instance" "main" {
  replication_instance_id    = "${var.project_name}-dms"
  replication_instance_class = "dms.t3.medium"
  allocated_storage          = 100

  vpc_security_group_ids      = [aws_security_group.dms.id]
  replication_subnet_group_id = aws_dms_replication_subnet_group.main.id

  publicly_accessible = false
  multi_az            = true

  tags = {
    Name = "${var.project_name}-dms-instance"
  }
}

MSK Kafka Cluster

resource "aws_msk_cluster" "main" {
  cluster_name  = "${var.project_name}-kafka"
  kafka_version = "3.5.1"

  # MSK requires the broker count to be a multiple of the number of client subnets.
  # The VPC above has two private subnets, so use 2 or 4 brokers
  # (or add a third AZ and run 3).
  number_of_broker_nodes = 4

  broker_node_group_info {
    instance_type   = "kafka.m5.large"
    client_subnets  = module.vpc.private_subnets
    security_groups = [aws_security_group.kafka.id]

    storage_info {
      ebs_storage_info {
        volume_size = 1000 # GiB per broker
      }
    }
  }

  encryption_info {
    encryption_in_transit {
      client_broker = "TLS"
      in_cluster    = true
    }
  }
}

These are production-ready configurations with:

- Multi-AZ deployment for high availability

- Encryption in transit and at rest

- Proper network isolation

- Resource tagging for cost tracking

Running in Production

Deploying is just the start. Here's what running this in production looks like.

Logging

All services send logs to CloudWatch Logs.

DMS Logs

DMS logs show:

- Replication task start/stop events

- Table load progress

- CDC lag metrics

- Errors and warnings

Check DMS logs when replication is slow or failing.

Kafka/MSK Logs

MSK can publish broker logs to CloudWatch Logs once log delivery is enabled on the cluster. Between the logs and the cluster metrics, look for:

- Under-replicated partitions

- Broker failures

- High request latency

- Consumer lag

Application Logs

Your services consuming from Kafka should log:

- Events processed

- Processing errors

- Event schema mismatches

- Downstream failures

Enable structured JSON logging for easier parsing and searching.

Monitoring

Key Metrics to Watch

For DMS:

- CDCLatencySource - How far behind real-time is DMS reading?

- CDCLatencyTarget - How far behind is the target?

- FullLoadThroughputRowsSource - Rows per second during full load

- ReplicationTaskStatus - Is the task running?

For MSK/Kafka:

- BytesInPerSec and BytesOutPerSec - Throughput

- MessagesInPerSec - Event rate

- UnderReplicatedPartitions - Data at risk

- OfflinePartitionsCount - Unavailable data

For Kinesis:

- IncomingRecords - Events received

- GetRecords.Success - Consumer health

- WriteProvisionedThroughputExceeded - Throttling

- ReadProvisionedThroughputExceeded - Consumer throttling

Dashboard Setup

Create a CloudWatch dashboard with three sections:

- Infrastructure health (CPU, memory, network)

- Stream processing metrics (events/sec, lag)

- Business metrics (orders processed, users synced)

Refresh interval: 1 minute for production dashboards.

Deployment Verification

After deploying, verify the system is healthy:

# Check DMS replication task status

aws dms describe-replication-tasks \
  --filters Name=replication-instance-arn,Values=<instance-arn>

# Check MSK cluster state

aws kafka describe-cluster --cluster-arn <cluster-arn>

# Check Kinesis stream status

aws kinesis describe-stream --stream-name <stream-name>

# Test database connectivity

psql -h <rds-endpoint> -U <username> -d <database> -c "SELECT 1"

All services should be in "running" or "active" state.
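
If you want to script this check, the sketch below inspects the parsed JSON output of the three AWS CLI commands above and reports anything that isn't running or active. The sample dictionaries are trimmed, made-up responses, and unhealthy_components is an illustrative helper rather than part of the template.

```python
def unhealthy_components(dms_tasks: dict, msk_cluster: dict, kinesis_stream: dict) -> list:
    """Return descriptions of components that are not in a running/active state."""
    problems = []
    for task in dms_tasks.get("ReplicationTasks", []):
        if task["Status"] != "running":
            problems.append(f'DMS task {task["ReplicationTaskIdentifier"]}: {task["Status"]}')
    state = msk_cluster["ClusterInfo"]["State"]
    if state != "ACTIVE":
        problems.append(f"MSK cluster: {state}")
    status = kinesis_stream["StreamDescription"]["StreamStatus"]
    if status != "ACTIVE":
        problems.append(f"Kinesis stream: {status}")
    return problems


# Trimmed, hypothetical CLI responses (as returned by json.loads on the command output).
dms = {"ReplicationTasks": [{"ReplicationTaskIdentifier": "orders-cdc", "Status": "stopped"}]}
msk = {"ClusterInfo": {"State": "ACTIVE"}}
kinesis = {"StreamDescription": {"StreamStatus": "ACTIVE"}}
print(unhealthy_components(dms, msk, kinesis))  # ['DMS task orders-cdc: stopped']
```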

Debugging

DMS isn't capturing changes

Check:

- Change logging is enabled on the source database (row-format binary logging for MySQL, logical replication for PostgreSQL)

- DMS user has replication permissions

- Security groups allow DMS to reach database

- CloudWatch logs for error messages

Kafka consumers are lagging

Check:

- Consumer group has enough instances

- No slow consumers blocking the group

- Partition count matches consumer parallelism

- No network issues between consumers and brokers
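
Two numbers help when working through this checklist: lag per partition, and how many consumers in the group are sitting idle. Here's a small sketch with made-up offsets. In practice you would read the end and committed offsets from your Kafka client or the consumer-groups tooling.

```python
def partition_lag(end_offsets: dict, committed_offsets: dict) -> dict:
    """Lag per partition: newest offset minus the group's committed offset."""
    return {p: end - committed_offsets.get(p, 0) for p, end in end_offsets.items()}


def idle_consumers(partitions: int, consumers: int) -> int:
    """Consumers beyond the partition count are assigned no partitions in a group."""
    return max(0, consumers - partitions)


end_offsets = {0: 1500, 1: 1480, 2: 9000}
committed = {0: 1495, 1: 1480, 2: 4000}
print(partition_lag(end_offsets, committed))      # {0: 5, 1: 0, 2: 5000}
print(idle_consumers(partitions=3, consumers=5))  # 2
```

Lag concentrated in one partition, as with partition 2 here, usually points to a hot key or a slow consumer rather than too few consumers overall.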

High costs

Check:

- DMS instance size - can you downsize?

- MSK broker count and instance type

- Kinesis shard count - do you need all shards?

- Data transfer costs between AZs

- CloudWatch log retention - reduce if possible

Health Check Endpoints

Implement health checks for every service:

# Lambda health check

curl https://<api-gateway-url>/health

# ECS service health

curl http://<load-balancer-dns>/health

# Kafka producing test message

kafka-console-producer.sh --bootstrap-server <broker> \
  --topic test-topic << EOF
{"message": "health check"}
EOF

Health checks should return 200 OK if the service is healthy and can reach dependencies.
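
Here's a framework-agnostic sketch of such a health check. Each dependency is a callable probe that returns True or False; the probe names are hypothetical, and returning 503 when a dependency is down is my assumption of a sensible convention, not something the template prescribes.

```python
from typing import Callable, Dict, Tuple


def health_check(dependencies: Dict[str, Callable[[], bool]]) -> Tuple[int, dict]:
    """Return (HTTP status, body): 200 if every dependency probe passes, else 503."""
    results = {}
    for name, probe in dependencies.items():
        try:
            results[name] = bool(probe())
        except Exception:
            results[name] = False  # a crashing probe counts as unhealthy
    status = 200 if all(results.values()) else 503
    return status, {"status": "ok" if status == 200 else "degraded", "checks": results}


print(health_check({"database": lambda: True, "kafka": lambda: True}))
# (200, {'status': 'ok', 'checks': {'database': True, 'kafka': True}})
print(health_check({"database": lambda: True, "kafka": lambda: False}))
# (503, {'status': 'degraded', 'checks': {'database': True, 'kafka': False}})
```

Returning the per-dependency results in the body makes it obvious which dependency failed without digging through logs.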

Cost Analysis

Let's talk about what this actually costs.

Development Environment

For a small dev environment (low volume, single AZ):

Production Environment

For production (high volume, multi-AZ, redundancy):

These are estimates. Actual costs depend on:

- Data volume and throughput

- Number of databases replicated

- Retention period for events

- Cross-region data transfer

Most Expensive Components

MSK Kafka is usually the biggest cost driver. Managed Kafka isn't cheap. Each broker costs money, and you need at least 3 for production (one per AZ for redundancy).

Alternatives:

- Self-host Kafka on EC2 (cheaper, more operational work)

- Use Kinesis instead of Kafka (different trade-offs)

- Use Amazon MQ (if you don't need Kafka specifically)

DMS Instance cost scales with data volume. If you're replicating terabytes daily, you need a large instance.

Optimization:

- Use multi-table tasks instead of one task per table

- Tune batch size and commit rate

- Use table filtering to replicate only needed tables

Data Transfer across AZs costs $0.01 per GB in each direction. This adds up fast with high throughput, especially since Kafka replication itself crosses AZs.

Optimization:

- Minimize cross-AZ traffic where possible

- Use VPC endpoints for AWS service calls
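
To get a feel for the numbers, here's a back-of-the-envelope calculation using the per-GB rate quoted above. The daily volumes are made-up examples. Pass rate_per_gb=0.02 if you want to count both the sending and the receiving side of each transfer.

```python
def monthly_cross_az_cost(gb_per_day: float, rate_per_gb: float = 0.01, days: int = 30) -> float:
    """Estimated monthly charge in USD for cross-AZ traffic at a flat per-GB rate."""
    return round(gb_per_day * days * rate_per_gb, 2)


print(monthly_cross_az_cost(500))   # 150.0
print(monthly_cross_az_cost(5000))  # 1500.0
```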

Cost Optimization Strategies

Right-size DMS instances

Start small and scale up based on CPUUtilization and SwapUsage metrics. Don't overprovision.

Use Savings Plans or Reserved Instances

If you know you'll run this for a year, commit to Reserved Instances where the service offers them (RDS, for example) for savings on the order of 30-40%.

Reduce log retention

Default CloudWatch log retention is "forever". Change it to 7 or 30 days for dev environments.

Compress Kafka messages

Enable compression (snappy or lz4) to reduce storage and transfer costs.
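
CDC events compress well because they repeat the same field names and values over and over. The sketch below demonstrates this on synthetic events. It uses gzip from Python's standard library as a stand-in (gzip is also a Kafka codec), since snappy and lz4 need third-party packages. Real ratios depend on your data.

```python
import gzip
import json

# Synthetic change events with repetitive structure, like real CDC traffic.
events = [
    {"table": "orders", "operation": "update", "id": i, "status": "shipped"}
    for i in range(1000)
]
payload = "\n".join(json.dumps(e) for e in events).encode("utf-8")
compressed = gzip.compress(payload)

ratio = len(compressed) / len(payload)
print(f"raw={len(payload)} bytes, gzip={len(compressed)} bytes, ratio={ratio:.2f}")
```

Kafka compresses whole batches, so larger producer batches generally compress better than many tiny ones.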

Auto-shutdown dev environments

Use Lambda + EventBridge to shut down dev infrastructure on nights and weekends. This can save up to about 70% on the hourly cost of resources that can be stopped.
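
Where does a figure like 70% come from? A week has 168 hours, and a dev environment that only runs during working hours uses a fraction of them. Here's the arithmetic, assuming purely hourly billing and example schedules of my own choosing:

```python
HOURS_PER_WEEK = 24 * 7  # 168


def shutdown_savings(hours_per_day: float, days_per_week: int) -> float:
    """Fraction of always-on hourly cost avoided by running only during work hours."""
    running_hours = hours_per_day * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


print(f"{shutdown_savings(10, 5):.0%}")  # 70%
print(f"{shutdown_savings(12, 5):.0%}")  # 64%
```

Storage, and any resource that can't be stopped, keeps billing around the clock, so the real saving is lower than the compute-only figure.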

Monitor with AWS Cost Explorer

Tag all resources with Environment and Project tags. Track costs by tag.

Final Thoughts

What We Built

This is a solid foundation for Change Data Capture streaming on AWS. The infrastructure handles:

- Real-time database change capture with DMS

- Durable event streaming with Kafka

- Stream processing with Kinesis

- High availability and fault tolerance

- Production-grade security and monitoring

It's designed to scale from small dev workloads to high-volume production systems.

Limitations

This is a template, not a complete solution.

You'll need to add:

- Actual CDC pipeline configuration (source/target endpoints)

- Schema registry for event format management

- Consumer applications to process events

- Data transformation logic

- Error handling and dead-letter queues

- Monitoring dashboards and alerts

- CI/CD pipeline for automated deployments

The Terraform code is intentionally minimal. It gives you the AWS provider setup and variable structure, but you provide the infrastructure resources.

No built-in schema evolution handling.

Data schemas change over time. You need a strategy for handling schema changes without breaking consumers. Consider AWS Glue Schema Registry or Confluent Schema Registry.
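
Until a registry is in place, consumers can at least be written as tolerant readers: require only the fields they truly need, default the ones added later, and ignore the rest. Here's a minimal sketch. The event fields (status, currency, coupon) are hypothetical examples, not a schema from this project.

```python
def read_order_event(payload: dict) -> dict:
    """Tolerant reader: accept old and new event shapes without failing."""
    return {
        "id": payload["id"],                         # required in every version
        "status": payload.get("status", "unknown"),  # default when a producer omits it
        "currency": payload.get("currency", "USD"),  # hypothetical field added later
    }


old_event = {"id": 1, "status": "paid"}
new_event = {"id": 2, "status": "paid", "currency": "EUR", "coupon": "X1"}  # extra field ignored
print(read_order_event(old_event))  # {'id': 1, 'status': 'paid', 'currency': 'USD'}
print(read_order_event(new_event))  # {'id': 2, 'status': 'paid', 'currency': 'EUR'}
```

This handles added columns gracefully. Renamed or retyped columns still need coordinated changes, which is where a schema registry with compatibility checks earns its keep.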

No state management across regions.

This deploys to a single region. For multi-region disaster recovery, you'll need additional configuration for cross-region replication and failover.

Lessons Learned

Start with managed services.

Self-hosting Kafka on EC2 seems cheaper initially. But operations costs (patching, scaling, monitoring failures) add up fast. Managed services cost more per hour but save engineer time.

Multi-AZ is worth it.

Single-AZ deployments are fragile. AZs fail more often than you think. The cost of multi-AZ is small compared to the cost of downtime.

Monitor CDC lag obsessively.

The most common production issue is replication lag. Set up alerts for CDCLatencySource > 60 seconds and investigate immediately.

Test failover regularly.

High availability only works if you test it. Schedule quarterly failover drills. Force an AZ failure and verify the system recovers.

When NOT to Use This Approach

Low-volume workloads

If you're replicating one small database with a few changes per minute, this is overkill. DMS + Lambda could be simpler and cheaper.

Real-time requirements < 1 second

DMS typically has 2-5 second latency. If you need sub-second replication, consider database-native replication, or application-level dual writes if you can accept the consistency risk of a write succeeding while the event publish fails.

Very high throughput (> 1M events/sec)

MSK can handle it, but costs get extreme. Consider Kinesis Data Streams with enhanced fan-out instead.

Non-AWS environments

This is AWS-specific. For multi-cloud CDC, look at Debezium + self-hosted Kafka or commercial tools like Striim.

Future Improvements

Here's what I'd add for production use:

Schema Registry

Integrate AWS Glue Schema Registry or Confluent Schema Registry to manage event schema versions.

Data Quality Monitoring

Add Great Expectations or AWS Deequ to validate data quality in the pipeline.

Cost Optimization

Implement auto-scaling for Kinesis shards and MSK storage. Add cost anomaly detection.

Multi-Region Replication

Extend to multi-region for disaster recovery and global low-latency access.

Event Enrichment

Add Lambda or Amazon Managed Service for Apache Flink (formerly Kinesis Data Analytics) for real-time event enrichment before downstream consumption.

Terraform Modules

Break the monolithic Terraform into reusable modules (VPC, DMS, Kafka) for better organization.

About This Project

Author: Rahul Ladumor

Email: rahuldladumor@gmail.com

GitHub: @rahulladumor

LinkedIn: linkedin.com/in/rahulladumor

Portfolio: acloudwithrahul.in

Repository: github.com/rahulladumor/change-data-capture-streaming

License: MIT License

Version: 1.0.0

If this helped you, drop a star on GitHub. If you build something with it, I'd love to hear about it.